codex-vision

A small Claude Code skill that lets Claude review screenshots, generate UI mocks, and edit images by routing them through OpenAI Codex's vision tools — three modes, one shell command per artifact, gated by a 50-case triggering eval.

The new image generation in OpenAI Codex is incredibly good. codex-vision is a tiny Claude Code skill (one bash wrapper plus a SKILL.md) that exposes it to Claude through a single shell command with three modes: review a screenshot, generate a UI mock, or edit an image.

npx skills add thenamangoyal/codex-vision -g -a claude-code -y

Drops the skill into ~/.claude/skills/codex-vision/. Needs the Codex CLI installed and authenticated once (codex login). See Install variants below for other options.
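A quick pre-flight check for the two prerequisites above might look like the sketch below. The function names `have_cmd` and `check_prereqs` are hypothetical and not part of the repo. The sketch only checks that a `codex` binary is on PATH and that the skill directory exists; it does not check authentication.

```shell
# Hypothetical helpers, not shipped with codex-vision.

# Return 0 if the named command is on PATH, 1 otherwise.
have_cmd() {
  command -v "$1" >/dev/null 2>&1
}

# Report on the two prerequisites; return non-zero if either is missing.
# $1 (optional): skill directory, defaulting to the install path named above.
check_prereqs() {
  local skill_dir status
  skill_dir="${1:-$HOME/.claude/skills/codex-vision}"
  status=0
  if have_cmd codex; then
    echo "ok: codex CLI found on PATH"
  else
    echo "missing: codex CLI (install it, then run: codex login)"
    status=1
  fi
  if [ -d "$skill_dir" ]; then
    echo "ok: skill directory exists at $skill_dir"
  else
    echo "missing: skill directory $skill_dir"
    status=1
  fi
  return "$status"
}
```

Run `check_prereqs` after installing. The wrapper's own `doctor` subcommand, mentioned under Install variants, remains the documented way to verify an install.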

One killer example

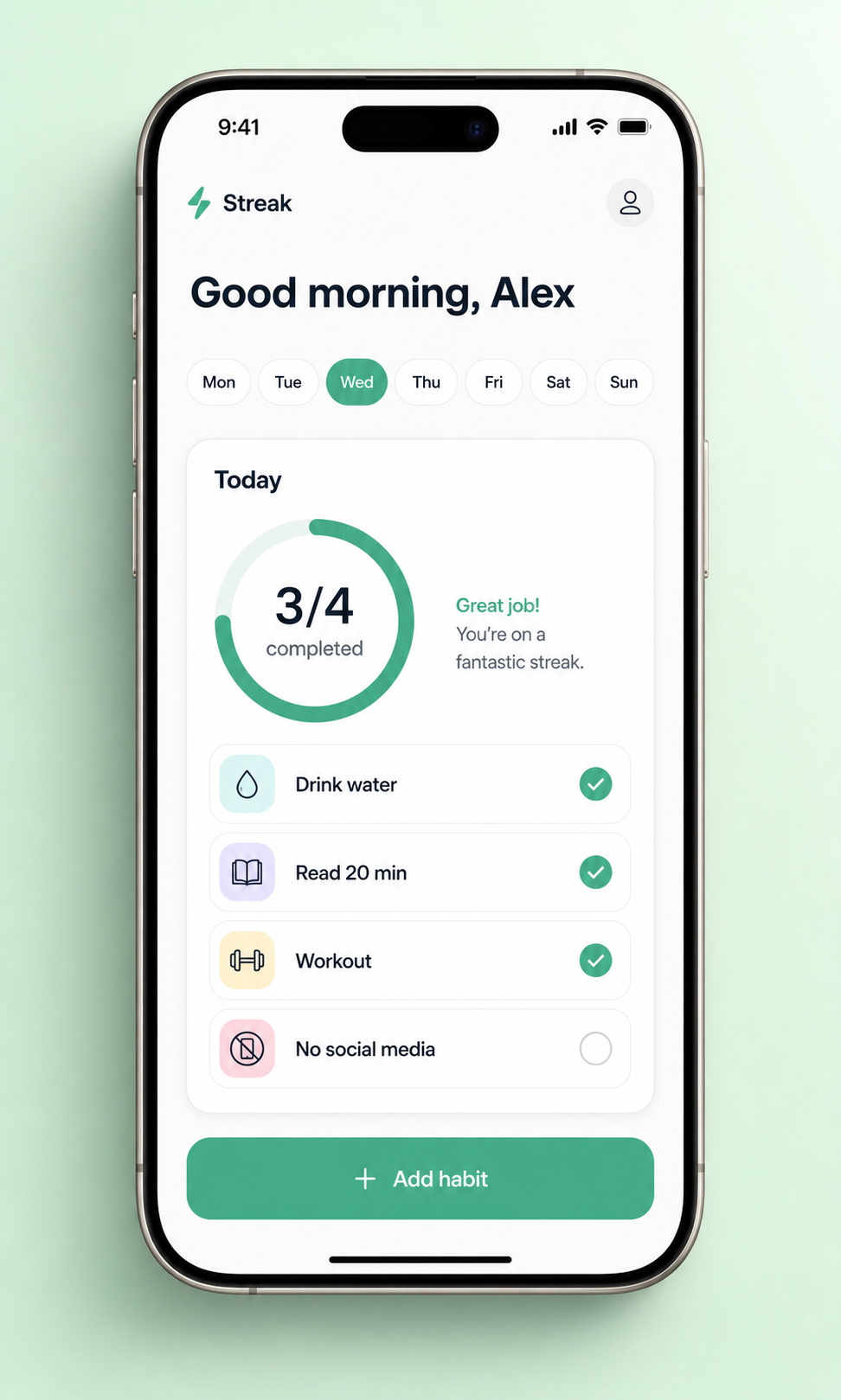

A “Streak” iOS habit-tracker mock inside a current-gen iPhone — one shell call, ~60 seconds on a vanilla MacBook, no Figma involved:

codex-vision generate "Minimalist mobile app screen for a fictional habit-tracker called 'Streak', shown inside a realistic titanium iPhone 15 Pro, viewed straight-on. Clean light theme: greeting, day-of-week pills with today highlighted, a 'Today' card with a circular progress ring (3/4) and 4 habit rows, primary 'Add habit' button at bottom. Mint-green seamless paper backdrop, soft drop shadow. 9:16 phone aspect inside a 4:5 image, App Store screenshot quality." \
  --out ~/Desktop/streak-app.png

The same skill works in the opposite direction: feed any screenshot to codex-vision review … and it returns a structured critique from Codex. The repo’s README walks through five more examples: pricing pages in browser frames, before/after redesigns, architecture diagrams, iMac landing-page heroes, and a 60-second product-design critique of a generated mock.

How it works

flowchart TD

U["User<br/><i>/codex-vision review ui.png</i>"] --> CC["Claude Code<br/><i>gating from SKILL.md</i>"]

CC -->|fire| W["codex-vision.sh wrapper<br/><i>route by mode, add -i</i>"]

CC -.->|skip / clarify| X["codex exec<br/>(text-only)"]

W -->|review| R["codex exec -i <png> '<prompt>'"]

W -->|generate| G["codex exec 'Use image_gen ...'"]

W -->|edit| E["codex exec -i <png> 'Use image_gen to edit ...'"]

R --> CX["OpenAI Codex"]

G --> CX

E --> CX

CX -->|review| VI["functions.view_image"]

CX -->|generate / edit| IG["image_gen.imagegen"]

VI --> CRIT["text critique back to user"]

IG --> PNG["PNG → /tmp/codex-vision-out/<slug>-<ts>.png"]

| Mode | What the wrapper does |
|---|---|
| review | `codex exec -i <png> "<prompt>"` → Codex’s `functions.view_image` tool |
| generate | wraps prompt as `"Use the built-in image_gen tool to generate ... Save to <out>"` |
| edit | `codex exec -i <png>` + `"Use the built-in image_gen tool to edit the attached image: ..."` |
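The routing in the table can be sketched as a single `case` statement. This is a dry-run illustration, not the repo's wrapper: `build_codex_cmd` is a hypothetical name, it prints the command instead of running it, and the punctuation joining the user prompt to the quoted instruction text is a guess because the table elides it.

```shell
# Hypothetical dry-run helper: print (do not run) the codex exec call per mode.
# Usage: build_codex_cmd review   <png> <prompt>
#        build_codex_cmd generate <out> <prompt>
#        build_codex_cmd edit     <png> <prompt>
build_codex_cmd() {
  local mode path prompt
  mode="$1"; path="$2"; prompt="$3"
  case "$mode" in
    review)
      # Attach the image with -i so Codex can actually see it.
      printf 'codex exec -i %s "%s"\n' "$path" "$prompt"
      ;;
    generate)
      # No input image; the prompt is wrapped with an image_gen instruction.
      # The ": " and ". " joins are assumptions, not the wrapper's wording.
      printf 'codex exec "Use the built-in image_gen tool to generate: %s. Save to %s"\n' \
        "$prompt" "$path"
      ;;
    edit)
      # Input image plus an image_gen edit instruction.
      printf 'codex exec -i %s "Use the built-in image_gen tool to edit the attached image: %s"\n' \
        "$path" "$prompt"
      ;;
    *)
      echo "unknown mode: $mode (expected review, generate, or edit)" >&2
      return 2
      ;;
  esac
}
```

For example, `build_codex_cmd review ui.png "Check contrast"` prints `codex exec -i ui.png "Check contrast"`. Only review and edit carry `-i`, which matches the table.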

The companion script most agents shell through (codex-companion.mjs) silently drops codex exec’s -i flag — without that flag, Codex can’t see images. codex-vision is the thin wrapper that calls codex exec -i directly with predictable session and log paths so Claude doesn’t have to reinvent the plumbing each time.
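The flowchart shows generated images landing at `/tmp/codex-vision-out/<slug>-<ts>.png`. One way such a predictable path could be derived is sketched below. The `slugify` and `default_out` names, the 40-character cap, the `image` fallback, and the timestamp format are all assumptions; the repo may build the slug and timestamp differently.

```shell
# Hypothetical: turn a prompt into a short, filesystem-safe slug.
slugify() {
  printf '%s' "$1" \
    | tr '[:upper:]' '[:lower:]' \
    | tr -cs 'a-z0-9' '-' \
    | cut -c1-40 \
    | sed -e 's/^-*//' -e 's/-*$//'
}

# Hypothetical: build an output path following the <slug>-<ts>.png pattern.
default_out() {
  local slug ts
  slug="$(slugify "$1")"
  ts="$(date +%Y%m%d-%H%M%S)"   # timestamp format is an assumption
  # Fall back to "image" when the prompt has no letters or digits.
  printf '/tmp/codex-vision-out/%s-%s.png\n' "${slug:-image}" "$ts"
}

# slugify "Streak habit-tracker mock!"   prints: streak-habit-tracker-mock
```

Deriving the path from the prompt and the clock keeps successive runs from overwriting each other while leaving the file easy to find afterwards.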

Does the trigger fire when it should?

Skill description fields are read by autonomous coding agents to decide whether to auto-invoke. Loose phrasing fires on every “screenshot” mention; tight phrasing skips legitimate review asks. Both fail silently. The repo treats SKILL.md as code under test:

- `tests/triggering/cases.yaml`: 50 hand-written user prompts (15 fire / 20 skip / 15 clarify), spanning happy paths, “screenshot” used as a verb, stale PNGs in `/tmp/`, URL-only references, and ambiguous “use codex” phrasing.
- `scripts/check-triggering.sh`: concatenates the live `SKILL.md` with every numbered prompt, asks Codex to classify each as `FIRE` / `SKIP` / `CLARIFY`, and fails on any false positive (expected `skip`/`clarify`, got `FIRE`) or false negative (expected `fire`, got `SKIP`/`CLARIFY`).
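The failure rule described for `check-triggering.sh` reduces to one comparison per case. The sketch below is an illustration of that rule only; `case_verdict` is a hypothetical name and the real script's structure is not shown in this document.

```shell
# Hypothetical: classify one eval case from its expected and actual labels.
# Labels are compared case-insensitively (cases use fire/skip/clarify,
# the classifier answers FIRE/SKIP/CLARIFY).
# Prints pass, false-positive, or false-negative; returns 1 on either failure.
case_verdict() {
  local expected got
  expected="$(printf '%s' "$1" | tr '[:lower:]' '[:upper:]')"
  got="$(printf '%s' "$2" | tr '[:lower:]' '[:upper:]')"
  # False positive: the skill would fire on a skip or clarify case.
  if [ "$expected" != "FIRE" ] && [ "$got" = "FIRE" ]; then
    echo "false-positive"
    return 1
  fi
  # False negative: the skill would stay silent on a fire case.
  if [ "$expected" = "FIRE" ] && [ "$got" != "FIRE" ]; then
    echo "false-negative"
    return 1
  fi
  echo "pass"
}
```

Under this rule, `case_verdict clarify SKIP` prints `pass`: the label differs from the expected one, but the skill does not fire, so the mismatch is not a failure.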

Figure: number of cases passing (of 50) per trial.

| Trial | Hard pass (no FP / no FN) | Exact-label agreement |
|---|---|---|
| trial 1 | 50/50 | 46/50 |
| trial 2 | 50/50 | 46/50 |
| trial 3 | 50/50 | 46/50 |

SKILL.md v0.3.2 reaches 50 / 50 hard pass across three independent trials, with 46 / 50 exact-label agreement. The four soft mismatches are clarify-expected cases that the model labels SKIP instead, which is acceptable because the skill still correctly does not fire. The eval surfaced six concrete defects in earlier description revisions, including false positives on edit/generate without explicit Codex intent, “screenshot” matching the verb form (“take a screenshot”), /codex-vision blocked by the image-context requirement, PNG/JPG matching that was too narrow, and “mock” being ambiguous between mockup and mock data. Each was fixed by tightening one clause in the description and re-running until the hard-pass count returned to 50 / 50.
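The two metrics differ only in how strictly labels are compared, which a short tally makes concrete. This is a sketch under assumptions: `tally_results` is a hypothetical name, and the one-pair-per-line input format is invented for illustration rather than taken from the repo.

```shell
# Hypothetical: read "expected got" pairs on stdin, one case per line,
# and print the hard-pass and exact-label counts.
tally_results() {
  awk '
    NF < 2 { next }                       # ignore blank or malformed lines
    { want = toupper($1); got = toupper($2); total++ }
    # Exact agreement: the labels match outright.
    want == got { exact++ }
    # Hard pass: the skill fires if and only if the case expected FIRE.
    (want == "FIRE") == (got == "FIRE") { hard++ }
    END { printf "hard pass %d/%d, exact %d/%d\n", hard, total, exact, total }
  '
}
```

With four made-up rows, `printf '%s\n' 'fire FIRE' 'skip SKIP' 'clarify SKIP' 'clarify CLARIFY' | tally_results` prints `hard pass 4/4, exact 3/4`. The `clarify SKIP` row is a soft mismatch of the kind described above: it lowers exact agreement without counting as a failure.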

Install variants

npx skills add thenamangoyal/codex-vision -g -a claude-code -y # Claude Code only (default)

npx skills add thenamangoyal/codex-vision -g --all # every coding agent (symlinked)

npx skills add thenamangoyal/codex-vision@v0.3.2 -g -a claude-code -y # pin a release tag

npx skills update codex-vision # update later

Project-scoped install? Drop -g and run inside a repo. Manual clone (no npx skills)? git clone https://github.com/thenamangoyal/codex-vision ~/.claude/skills/codex-vision. Verify with ~/.claude/skills/codex-vision/scripts/codex-vision.sh doctor.

github.com/thenamangoyal/codex-vision · v0.3.2 · MIT